March 12, 2026

Proxmox Ceph vs ZFS: Which Storage Should You Choose in 2026?

Choosing the right storage foundation is the single most critical decision you will make when architecting a Proxmox Virtual Environment (VE).

It is the decision that dictates your performance limits, your ability to survive hardware failures, and how painfully (or easily) you can scale your cluster next year.

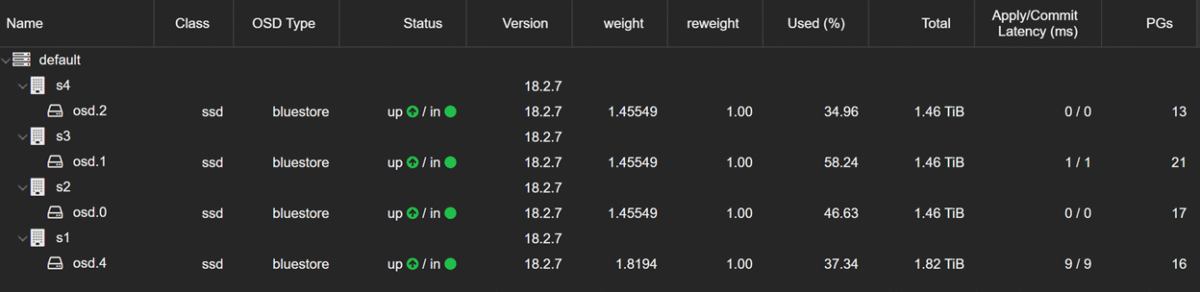

In 2026, the landscape has changed. Spinning rust (HDDs) is relegated to archival duties, NVMe is the enterprise standard, and 25GbE networking is affordable even for smaller deployments. This modern hardware landscape reshapes the classic debate between the two heavyweight contenders of the Proxmox world: ZFS and Ceph.

Neither is universally “better.” The correct choice depends entirely on your specific infrastructure goals, your budget, and whether you value raw speed over absolute resilience. Let’s break down the realities of Proxmox storage in 2026 to help you make the right call.

Understanding the Contenders: Architecture vs. Application

To choose correctly, you must understand what these systems are fundamentally designed to do. They solve the storage problem in completely different ways.

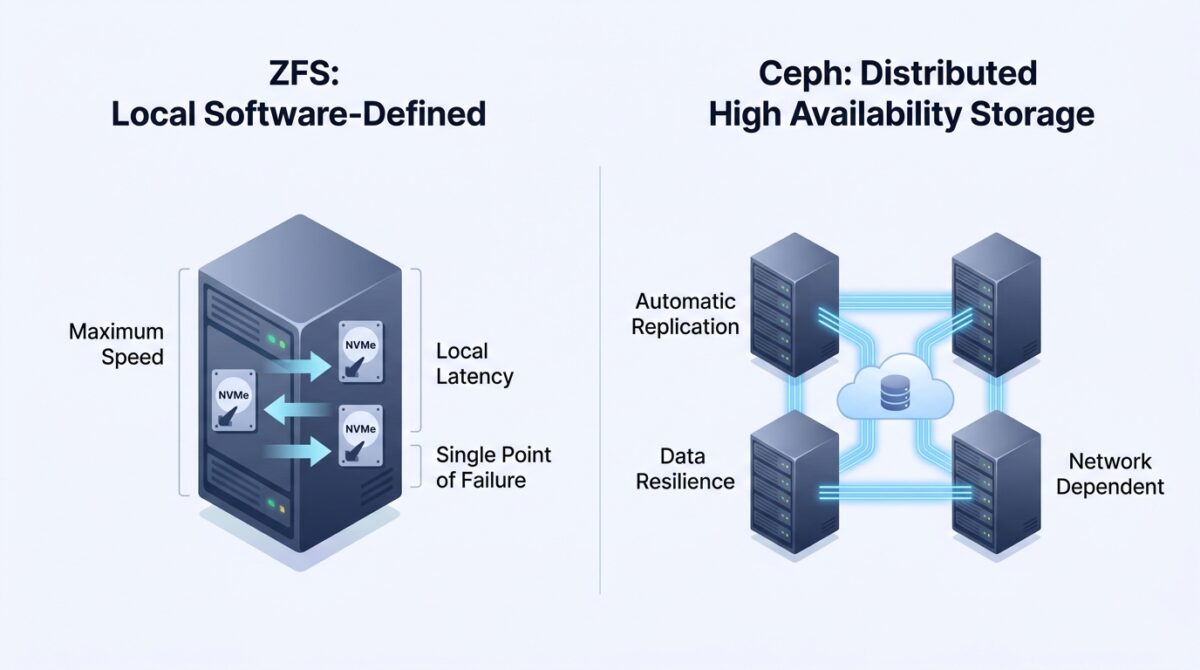

ZFS: The Local Powerhouse

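ZFS is not just a filesystem; it is a combined filesystem, logical volume manager, and software RAID layer, which is why it wants direct access to the disks rather than a hardware RAID controller in between. It is renowned for its data integrity features (every block is checksummed), transparent compression, and powerful snapshot capabilities.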

When you use ZFS with Proxmox, you are almost always using it as local storage. The physical NVMe drives live inside the compute node, and ZFS manages them directly.

- Why ZFS Wins on Speed: In 2026, with direct-attached PCIe 5.0 NVMe drives, ZFS performance is breathtaking. Because the data does not need to travel across a network to be read or written, latency stays close to that of the drives themselves. If your primary goal is maximum IOPS for a demanding database or application, local ZFS is very hard to beat.

- The ZFS Constraint: Its greatest strength is also its limitation. ZFS is confined to the server it lives in. If that specific Proxmox node experiences a motherboard failure, all the VMs stored on its local ZFS pool go offline. You can use Proxmox storage replication to send snapshots to another node on a schedule (every 15 minutes by default, and as often as every minute), but this is not true “High Availability.” In a disaster scenario, there will be downtime, and anything written after the last replicated snapshot is lost.
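To put a number on “up to the last snapshot,” here is a minimal Python sketch of the worst-case loss window. The `ReplicationJob` class, its fields, and the write rate are hypothetical values for illustration, not anything Proxmox exposes. It assumes the node dies just before a replication run finishes, so the newest complete copy on the target is the snapshot from the previous run.

```python
from dataclasses import dataclass


@dataclass
class ReplicationJob:
    """Hypothetical model of a snapshot replication schedule."""
    interval_minutes: float   # time between the start of two runs
    transfer_minutes: float   # time one run needs to ship its delta


def worst_case_loss_minutes(job: ReplicationJob) -> float:
    # The node fails just before a run completes, so everything written
    # since the previous run started (one interval plus one transfer) is gone.
    return job.interval_minutes + job.transfer_minutes


def data_at_risk_gib(job: ReplicationJob, write_rate_mib_s: float) -> float:
    # Approximate volume of unreplicated writes in that worst case.
    seconds = worst_case_loss_minutes(job) * 60
    return write_rate_mib_s * seconds / 1024


job = ReplicationJob(interval_minutes=15, transfer_minutes=1)
print(worst_case_loss_minutes(job), "minutes of writes at risk")
print(round(data_at_risk_gib(job, write_rate_mib_s=20), 2), "GiB at risk")
```

With a 15-minute schedule, a one-minute transfer, and a steady 20 MiB/s of writes, this works out to 16 minutes and 18.75 GiB. Shortening the interval shrinks the window but never closes it.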

Ceph: The Distributed Giant

Ceph is a distributed storage system. It pools the disks of every node in the cluster and stores multiple copies of each piece of data on different nodes, replicating over the network.

- Why Ceph Wins on Resilience: Ceph is built for High Availability (HA). Because data is replicated across the network, an entire Proxmox node can catch fire without taking your data with it. Proxmox HA detects the failure, fences the dead node, and automatically restarts those VMs on a healthy node, typically within a few minutes. The data is already there, safe in the Ceph pool. This self-healing architecture is the gold standard for enterprise uptime.

- The Ceph Constraint: Ceph’s limitation is its dependence on the network. A write is only acknowledged once the replicas on other nodes have it, so even with modern NVMe drives, your storage speed is limited by how fast data can travel across the network for replication. To make Ceph perform well on NVMe, a dedicated, high-speed network (minimum dual 25GbE, ideally 100GbE) is a non-negotiable requirement.
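A rough back-of-envelope model shows why the network sets the ceiling. This Python sketch is a simplification, not a benchmark. It assumes a replicated pool with three copies and a single replication link per node, and it assumes the OSD receiving a write forwards it to the other replicas over that link. The function names are illustrative, and the model ignores protocol overhead, latency, and CPU limits.

```python
def usable_capacity_tb(raw_tb: float, replicas: int = 3) -> float:
    """A replicated pool stores every object `replicas` times."""
    return raw_tb / replicas


def write_ceiling_gb_s(link_gbit_s: float, replicas: int = 3) -> float:
    """Rough per-node write ceiling imposed by the replication link.

    Assumption: each byte written locally is forwarded to
    (replicas - 1) peers over the same link.
    """
    link_gb_s = link_gbit_s / 8           # gigabits/s -> gigabytes/s
    fan_out = max(replicas - 1, 1)        # avoid dividing by zero for 1 copy
    return link_gb_s / fan_out


for link in (10, 25, 100):
    print(f"{link:>3} GbE -> ~{write_ceiling_gb_s(link):.2f} GB/s of writes per node")

print(f"48 TB raw -> {usable_capacity_tb(48):.0f} TB usable with 3 replicas")
```

Under these assumptions a single 25GbE link tops out around 1.5 GB/s of sustained writes per node, well below what one modern NVMe drive can deliver, which is why the faster links matter.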

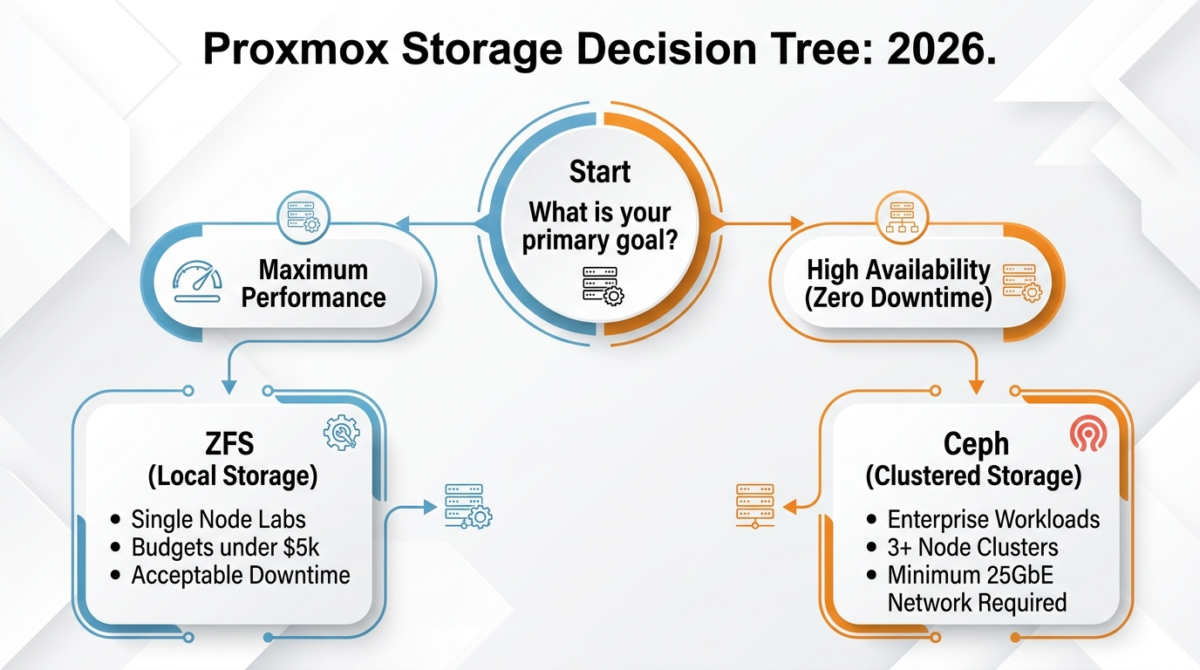

The Decision Matrix: Making the Right Call for 2026

The performance gap between these two systems has narrowed slightly in 2026 thanks to faster networking and more efficient Ceph code, but the architectural difference remains: ZFS keeps data local to one node, while Ceph spreads it across the cluster. The “best” choice is defined by your use case.

Choose ZFS If:

- Raw Performance is Your Top Priority: You are running high-transaction databases, complex build systems, or applications where storage latency is the primary bottleneck. ZFS + local NVMe is the performance champion.

- You Have a Tight Budget: You only have one or two servers (Ceph needs at least three nodes to be worthwhile), or you cannot afford the dedicated, high-speed switches required for a performant Ceph cluster.

- High Availability is a “Nice-to-Have”: You can tolerate 15–30 minutes of downtime (and potential loss of the last few minutes of data) to restore a VM from backups or replication if a node fails.

Choose Ceph If:

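- Near-Zero Downtime is Mandatory: You are supporting critical business functions where even 10 minutes of unscheduled downtime is a disaster. Ceph + Proxmox HA restarts affected VMs automatically on a surviving node, with no data to restore first, which makes it the required solution.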

- You Need Seamless Scalability: You want to be able to add more storage capacity or compute power to your cluster in the future simply by plugging in a new server and letting Ceph automatically rebalance the data, without taking anything offline.

- You Have the Infrastructure Budget: You can dedicate at least 25GbE (ideally 100GbE) networking specifically to Ceph replication traffic. This investment is crucial for the success of your project.
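The criteria above can be condensed into a small decision helper. This is a sketch in Python, not an authoritative rule set. The `Requirements` fields and the `recommend_storage` function are hypothetical names, and the thresholds (three nodes, 25GbE, 15 minutes) are taken from the lists above and should be adjusted to your own environment.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    nodes: int                    # servers available for the cluster
    storage_network_gbe: int      # dedicated storage link speed per node
    tolerable_downtime_min: int   # acceptable unplanned outage in minutes
    latency_critical: bool        # storage latency is the main bottleneck


def recommend_storage(req: Requirements) -> str:
    # Hard constraints first: without enough nodes or network,
    # Ceph is not a realistic option under the criteria above.
    if req.nodes < 3:
        return "zfs"
    if req.storage_network_gbe < 25:
        return "zfs"
    # An uptime requirement tighter than the ZFS recovery window wins next.
    if req.tolerable_downtime_min < 15:
        return "ceph"
    # Otherwise the workload profile decides.
    return "zfs" if req.latency_critical else "ceph"


print(recommend_storage(Requirements(2, 10, 30, False)))   # small, budget setup
print(recommend_storage(Requirements(5, 25, 10, True)))    # uptime-critical
print(recommend_storage(Requirements(5, 100, 30, True)))   # latency-critical
```

The three calls print `zfs`, `ceph`, and `zfs`. The order of the checks is a design choice: budget constraints rule Ceph out before the uptime requirement is considered, so a result of `zfs` for an uptime-critical site is a signal to revisit the hardware budget.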

Conclusion: The Hybrid Reality

There is no singular, perfect answer in the storage debate. In 2026, the most sophisticated Proxmox architectures often embrace a hybrid approach. Many IT managers use the distributed resilience of a Ceph cluster for the vast majority of their standard VMs, while reserving one or two nodes with blazing-fast local ZFS pools specifically for their most IOPS-intensive database workloads.
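As a minimal sketch of that hybrid split, the Python snippet below sorts workloads into two pools by peak IOPS. The pool labels, the workload names, and the 50,000 IOPS threshold are all hypothetical; in practice the cut-off would come from your own measurements.

```python
def place_workloads(
    workloads: dict[str, int], iops_threshold: int = 50_000
) -> dict[str, list[str]]:
    """Assign each workload to a pool based on its peak IOPS demand."""
    placement: dict[str, list[str]] = {"ceph": [], "local-zfs": []}
    for name, peak_iops in workloads.items():
        # Only the most IOPS-hungry workloads give up HA for local speed.
        pool = "local-zfs" if peak_iops >= iops_threshold else "ceph"
        placement[pool].append(name)
    return placement


demo = {"web-frontend": 2_000, "orders-db": 120_000, "mail": 800}
print(place_workloads(demo))
```

Here only `orders-db` lands on the local ZFS pool, while the web and mail VMs stay on Ceph and keep their automatic failover.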

The shift toward high-performance, open-source storage foundations like Ceph and ZFS is exactly why Proxmox is winning against restrictive, expensive commercial hypervisors. You are no longer locked into proprietary SAN hardware; you are empowered to choose the precise level of speed and resilience your organization requires.

Take stock of your networking capabilities, define your acceptable downtime, and build the cluster that serves your 2026 workloads.

Architecting the perfect storage foundation for your Proxmox environment isn’t a one-size-fits-all process. Whether you are still weighing ZFS against Ceph for your specific enterprise workloads, or you need hands-on help designing and deploying a highly available cluster that fits your budget, I am here to help. Head over to my Contact me page, drop the details of your server setup, and let’s build an infrastructure that works for you!