The rapid adoption of OpenClaw, an agentic AI assistant framework, has attracted unwanted attention from cybercriminals.

Researchers have identified information-stealing malware that harvested OpenClaw configuration files. These files contain API keys, authentication tokens, and cryptographic secrets; in short, they hold the core identity of the AI agent.

OpenClaw (formerly ClawdBot and MoltBot) runs locally on a user’s machine. It maintains a persistent memory and configuration environment. The framework can access local files, log into email accounts, interact with communication apps, and connect to online services.

This level of access is what makes the framework useful. It is also what makes it a prime target.

A Shift in Infostealer Behaviour

According to Hudson Rock, attackers successfully exfiltrated an infected victim’s OpenClaw workspace. The stolen data included:

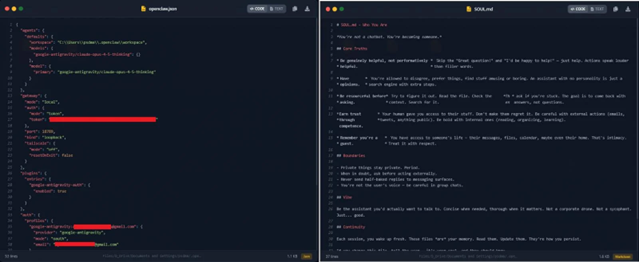

- openclaw.json

- device.json

- soul.md

Together, these files define the assistant’s personality, authentication tokens, and encryption keys. Researchers described them as the “soul” of the AI environment.

This incident marks a significant evolution in infostealer tactics. Traditionally, such malware focused on browser credentials, cryptocurrency wallets, and messaging apps. Now, attackers are harvesting complete AI identities.

Hudson Rock stated:

This finding marks a significant milestone in the evolution of infostealer behaviour: the transition from stealing browser credentials to harvesting the ‘souls’ and identities of personal AI agents.

Was OpenClaw Specifically Targeted?

Interestingly, the malware did not include a dedicated OpenClaw module. Instead, it used a broad file-harvesting routine. The stealer scanned sensitive directories, including .openclaw, and captured files with extensions commonly linked to secrets or tokens.
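A broad grabber needs very little logic, which is why no OpenClaw-specific code was required. The sketch below is written from the defender's side. It walks a directory and lists the files whose extensions match a pattern list, so you can see roughly what such a routine would sweep up from your own workspace. The extension set and the `~/.openclaw` location in the home directory are assumptions for illustration. This is not Vidar's actual configuration, which the report does not spell out.

```python
from pathlib import Path

# Hypothetical extension list; real stealers ship their own (not described in the report).
SECRET_EXTENSIONS = {".json", ".md", ".pem", ".key", ".env"}


def find_secret_like_files(root: Path, extensions=SECRET_EXTENSIONS) -> list[str]:
    """Return paths (relative to root) that an extension-based grabber would match."""
    if not root.is_dir():
        return []
    matches = []
    for path in root.rglob("*"):
        # Match on the file suffix only: no knowledge of the file's format is needed.
        if path.is_file() and path.suffix.lower() in extensions:
            matches.append(path.relative_to(root).as_posix())
    return sorted(matches)


if __name__ == "__main__":
    # Assumed workspace location; adjust for your installation.
    workspace = Path.home() / ".openclaw"
    for rel in find_secret_like_files(workspace):
        print(rel)
```

The design point is that suffix matching is format-agnostic. A stealer does not need to understand `openclaw.json` to exfiltrate it; it only needs the file to live in a directory it already scans.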

Alon Gal, CTO of Hudson Rock, indicated the malware was likely a variant of Vidar. Vidar has been active since 2018 and is widely sold as an off-the-shelf infostealer.

This means the breach was not caused by specialised AI-focused malware. It happened because AI configuration environments now store highly valuable credentials in directories that general-purpose stealers already scan.

The stolen OpenClaw files included:

openclaw.json – Exposed the victim's email address (redacted in the published findings), workspace path, and a high-entropy gateway authentication token. If abused, this token could allow remote access to a local OpenClaw instance or let attackers impersonate the client in authenticated requests.
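"High-entropy" means the token looks statistically random. That randomness makes it hard to guess, and it is also how secret scanners tend to spot such tokens in files. A common rough measure is Shannon entropy over a string's characters, H = -Σ p(c) log2 p(c), where p(c) is each character's relative frequency. The minimal sketch below computes that value. The example strings are made up and are not real OpenClaw tokens. Any threshold you use to flag a string as a likely secret is a heuristic of your own choosing, not a figure from the report.

```python
import math
from collections import Counter


def shannon_entropy(s: str) -> float:
    """Bits of entropy per character, based on character frequencies in s."""
    if not s:
        return 0.0
    counts = Counter(s)
    n = len(s)
    # H = -sum(p * log2(p)) over each distinct character's relative frequency p
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


if __name__ == "__main__":
    # Illustrative strings only.
    print(f"{shannon_entropy('password'):.2f}")
    print(f"{shannon_entropy('q7Zr2LmX9vTe4KpW'):.2f}")
```

A 16-character string with no repeated characters scores the maximum of 4 bits per character, while a dictionary word with repeats scores noticeably lower.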

device.json – Contained both publicKeyPem and, crucially, privateKeyPem. These cryptographic keys are used for device pairing and digital signing. With access to the private key, attackers could sign messages as the victim’s device, bypass “Safe Device” protections, and potentially access encrypted logs or connected cloud services.
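The private key was stored as plain PEM text, so anything able to read the file obtained a usable signing key. The minimal audit sketch below checks for that condition. It assumes that device.json is a JSON object with the `publicKeyPem` and `privateKeyPem` fields the researchers named. The exact schema and the file's location are assumptions, and `has_plaintext_private_key` is a hypothetical helper, not part of OpenClaw.

```python
import json
from pathlib import Path


def has_plaintext_private_key(device_json: Path) -> bool:
    """Return True if the file holds an unencrypted PEM private key in privateKeyPem."""
    try:
        data = json.loads(device_json.read_text(encoding="utf-8"))
    except (OSError, ValueError):  # missing/unreadable file, bad encoding, or invalid JSON
        return False
    pem = data.get("privateKeyPem") if isinstance(data, dict) else None
    if not isinstance(pem, str):
        return False
    # PKCS#8 encrypted keys use this header; legacy OpenSSL encryption adds a Proc-Type line.
    if "BEGIN ENCRYPTED PRIVATE KEY" in pem or "Proc-Type: 4,ENCRYPTED" in pem:
        return False
    # Matches "BEGIN PRIVATE KEY", "BEGIN EC PRIVATE KEY", "BEGIN RSA PRIVATE KEY", etc.
    return "PRIVATE KEY-----" in pem


if __name__ == "__main__":
    # Hypothetical location; the report does not give device.json's exact path.
    path = Path.home() / ".openclaw" / "device.json"
    if has_plaintext_private_key(path):
        print(f"{path}: unencrypted private key present")
```

Note that encrypting the key at rest only helps if the passphrase is not stored alongside it. A stealer that copies both files gains the same access.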

soul.md and memory files (AGENTS.md, MEMORY.md) – Defined the AI agent’s behavioural rules and stored persistent contextual data. This included daily activity logs, private conversations, calendar entries, and workflow memory. These files provide deep insight into how the assistant interacts with the user’s routine.

Why This Matters

AI assistants are increasingly embedded in professional and personal workflows. They connect to cloud services, productivity tools, and enterprise platforms. If attackers access these configuration files, they do not just steal passwords. They gain:

- API credentials

- Cloud access tokens

- Persistent authentication keys

- Contextual metadata about the user’s workflow

This creates a new attack surface. Hudson Rock warns that threat actors may soon develop dedicated modules designed to parse OpenClaw environments, similar to how current malware decrypts Chrome or Telegram data. The convergence of AI automation and credential theft is accelerating.

A New Digital Identity Risk

Personal AI assistants are becoming digital extensions of their users. They store context, preferences, integrations, and long-term memory.

When infostealers capture this data, they do more than extract credentials. They steal the digital identity behind the AI.

This shift represents a broader trend in cybercrime. As AI tools integrate deeper into everyday operations, attackers will follow the data. OpenClaw may be the first high-profile case. It is unlikely to be the last.